It turns out the data described in this post is used to determine ice and other terrestrial, atmospheric, and oceanic variables; it is not the source of the RSS data. To the best of my knowledge, raw RSS data isn't available online. See this post for more information.

=================

Well, so much for getting back to my physics code. There seems to be a hole in the sceptic community's understanding of raw UAH temperature data. I really think this hole needs to be filled, and I think I'm going to have to be the one to fill it. I'm going to post the steps I take along the way, so there's a written trail for others in the community to follow if they want to. It'll also give anyone out there who's familiar with raw UAH temperature data the chance to tell me if I'm going off into the weeds, or if there's a better way to do what I'm doing. I'm hardly a "satellite raw data expert", so if you see me making a mistake, don't be shy about pointing it out. :)

This post describes how to get the raw data, what format that data is in, and how to get a few utilities for working with the data. So, here we go...

UAH and RSS Data

First of all, what are UAH and RSS data? They're both satellite data sets: one comes from the University of Alabama in Huntsville (UAH) and the other from a company called Remote Sensing Systems (RSS). Both are processed data sets derived from the same raw source, NASA's Aqua satellite. You can read about this satellite and how UAH uses its data in this article on the Watts Up With That blog.

So if we want the raw data behind UAH and RSS, we want the data from the Aqua satellite.

Getting Aqua Satellite Data

After doing some searching on the net, I found the raw data for the Aqua satellite on the National Snow and Ice Data Center (NSIDC) AMSR-E Aqua data order form. Follow these steps to get the data you're looking for:

On the Order Data screen, click the Data Pool link.

On the Data Pool screen, click the AE_L2A.2 link. This data set contains the AMSR-E/Aqua global swath brightness temperature data.

Now you're at the data selection screens. These screens let you select the date and time of the data you want, as well as spatial and day/night options. NOTE: A single day of data can be anywhere from 1 to 2.5 GB! So you won't want to download much at a time. Stick to small date ranges, probably one or two days.

In this example, I'm interested in the last full day of data, which is currently 12 JAN 2010. In the calendar at the bottom of the screen, I click that date.

The next screen lets me pick the time of day I'm interested in. I want the entire day, and I don't want to set any day/night or spatial options, so I'm ready to move on to the download screen. I do this by clicking the "Get the granules that match the above criteria" link in the middle of the screen.

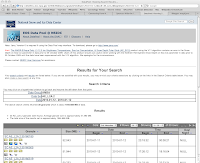

Now I'm at the screen showing the results of the search. At the bottom of this screen is a list of "granules" that matched my search. These granules are the data files to be downloaded. The top of the Results box shows that the first 10 of 30 granules are being displayed, along with controls for displaying the rest. We want all 30.

To download, right-click the link to each granule and select Download. The links are on the left side of the Results box, one row per granule; the first link in this example is named SC:AE_L2A.2:35160180. Download the first 10, then go to the 2nd screen and download 10 more, then go to the 3rd screen and download the last 10.
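Right-clicking 30 links gets tedious. If you copy the URLs that the granule links point to into a list, a short Python sketch like the one below can fetch them in one go. It's a minimal sketch under a few assumptions: the URLs are whatever the Results page links to (I'm not guessing at their exact form here), each URL ends in a usable file name, and the 2.5 GB/day figure from the note above is a fair upper bound for the free-space check. The function names are my own, not part of any NSIDC tool.

```python
import shutil
import urllib.request
from pathlib import Path
from urllib.parse import urlparse

# Upper bound from the note above: one day of AE_L2A data can reach ~2.5 GB.
MAX_BYTES_PER_DAY = 2.5e9


def enough_space(dest_dir, days=1, bytes_per_day=MAX_BYTES_PER_DAY):
    """Return True if dest_dir's file system has room for `days` of data."""
    return shutil.disk_usage(dest_dir).free >= days * bytes_per_day


def download_granules(urls, dest_dir, days=1, bytes_per_day=MAX_BYTES_PER_DAY):
    """Download each granule URL into dest_dir, skipping files already there.

    Returns the local paths in the same order as `urls`.
    """
    dest = Path(dest_dir)
    dest.mkdir(parents=True, exist_ok=True)
    if not enough_space(dest, days, bytes_per_day):
        raise OSError(f"not enough free space in {dest} for {days} day(s) of data")
    paths = []
    for url in urls:
        # Name the local file after the last component of the URL path.
        target = dest / Path(urlparse(url).path).name
        if not target.exists():
            # Download to a ".part" file first, then rename, so an interrupted
            # run never leaves a truncated file under the final name.
            tmp = target.with_name(target.name + ".part")
            urllib.request.urlretrieve(url, tmp)
            tmp.replace(target)
        paths.append(target)
    return paths
```

Because finished files are skipped, you can simply rerun the script after a dropped connection and it picks up where it left off.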

Congratulations, you now have all the raw Aqua data for Jan 12, 2010.
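Before going further, it's worth a quick sanity check that all 30 files arrived and that none of them is, say, an HTML error page saved under a granule's name. A cheap way to do that is to look at each file's first few bytes. As far as I know, AMSR-E products are HDF-EOS2 files, which are built on HDF4, and every HDF4 file starts with the 4-byte signature 0x0E 0x03 0x13 0x01. I've also included the 8-byte HDF5 signature in case you run into newer products; this sketch only checks it at offset 0, although HDF5 allows it at certain later offsets too. The expected count of 30 comes from this example day and the function names are my own.

```python
from pathlib import Path

# First four bytes of every HDF4 file (HDF-EOS2 is built on HDF4).
HDF4_MAGIC = b"\x0e\x03\x13\x01"
# HDF5 files use this 8-byte signature instead (checked at offset 0 only).
HDF5_MAGIC = b"\x89HDF\r\n\x1a\n"


def hdf_flavor(path):
    """Return 'HDF4', 'HDF5', or None based on the file's leading bytes."""
    with open(path, "rb") as f:
        head = f.read(8)
    if head.startswith(HDF4_MAGIC):
        return "HDF4"
    if head == HDF5_MAGIC:
        return "HDF5"
    return None


def check_granules(dest_dir, expected=30, pattern="*"):
    """Return a list of problems found in dest_dir (empty means all good)."""
    files = sorted(p for p in Path(dest_dir).glob(pattern) if p.is_file())
    problems = []
    if len(files) != expected:
        problems.append(f"expected {expected} files, found {len(files)}")
    for p in files:
        if hdf_flavor(p) is None:
            problems.append(f"{p.name}: not an HDF file (maybe an HTML error page?)")
    return problems
```

An empty list from check_granules means every file is there and at least looks like HDF.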

Aqua Satellite Data Format

The Aqua satellite data files are in a format known as Hierarchical Data Format - Earth Observing System (HDF-EOS). These files contain a mix of binary and text data, and the text data is in a hierarchical format custom designed by NASA. There's a page on the NSIDC web site that describes the format. It says:

Hierarchical Data Format (HDF) is the standard data format for all NASA Earth Observing System (EOS) data products. HDF is a multi-object file format developed by The HDF Group.

The HDF Group developed HDF to assist users in the transfer and manipulation of scientific data across diverse operating systems and computer platforms, using FORTRAN and C calling interfaces and utilities. HDF supports a variety of data types: n-Dimensional scientific data arrays, tables, text annotations, several types of raster images and their associated color palettes, and metadata. The HDF library contains interfaces for storing and retrieving these data types in either compressed or uncompressed formats.

For each data object in an HDF file, predefined tags identify the type, amount, and dimensions of the data; and the file location of various objects. The self-describing capability of HDF files helps users to fully understand the file's structure and contents from the information stored in the file itself. A program interprets and identifies tag types in an HDF file and processes the corresponding data. A single HDF file can also accommodate different data types, such as symbolic, numerical, and graphical data; however, raster images and multidimensional arrays are often not geolocated. Because many earth science data structures need to be geolocated, The HDF Group developed the HDF-EOS format with additional conventions and data types for HDF files.

HDF-EOS supports three geospatial data types: grid, point, and swath, providing uniform access to diverse data types in a geospatial context. The HDF-EOS software library allows a user to query or subset the contents of a file by earth coordinates and time if there is a spatial dimension in the data. Tools that process standard HDF files also read HDF-EOS files; however, standard HDF library calls cannot access geolocation data, time data, and product metadata as easily as with HDF-EOS library calls.
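The quoted passage mentions subsetting swath data by earth coordinates. In a swath, each measurement sits in a 2-D array indexed by scan line and pixel, with matching latitude and longitude arrays giving each pixel's location. The sketch below shows the idea in plain Python: keep only the pixels whose latitude and longitude fall inside a box. It is not the HDF-EOS library's own subsetting call, and it assumes you've already pulled the latitude, longitude, and brightness temperature arrays out of a granule (I haven't worked out the real dataset names yet); `subset_swath` is a hypothetical helper.

```python
def subset_swath(lat, lon, values, lat_range, lon_range):
    """Pick swath pixels whose geolocation falls inside a lat/lon box.

    lat, lon, values: equally shaped 2-D lists indexed [scan][pixel].
    lat_range: (south, north) in degrees.
    lon_range: (west, east) in degrees within [-180, 180]; if west > east,
    the box is treated as crossing the 180-degree meridian.
    Returns a list of (scan, pixel, value) tuples.
    """
    south, north = lat_range
    west, east = lon_range
    picked = []
    for i, (lat_row, lon_row, val_row) in enumerate(zip(lat, lon, values)):
        for j, (la, lo, v) in enumerate(zip(lat_row, lon_row, val_row)):
            if not south <= la <= north:
                continue
            if west <= east:
                inside = west <= lo <= east
            else:  # box wraps across the dateline
                inside = lo >= west or lo <= east
            if inside:
                picked.append((i, j, v))
    return picked
```

Handling the dateline case explicitly matters for a polar-orbiting satellite like Aqua, since its swaths regularly cross the 180-degree meridian.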

From a computer programmer's point of view, we've got a custom data format that is extremely flexible but is going to be a bit of a pain to parse. Luckily, NASA provides some tools to make it easier to work with the data.
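For the text part, as far as I can tell the HDF-EOS metadata is written in ODL (Object Description Language): nested GROUP = name ... END_GROUP = name and OBJECT = name ... END_OBJECT = name blocks holding key = value lines, ending with END. Here's a minimal Python sketch that turns that kind of text into nested dictionaries. It assumes one key = value per line and skips things real ODL allows, such as values spanning several lines and typed values (everything comes back as a string). The sample names in the usage check are made up, not taken from an actual AMSR-E file.

```python
def parse_odl(text):
    """Parse simple ODL text into nested dicts.

    GROUP/OBJECT blocks become nested dicts keyed by block name; other
    "key = value" lines become string entries with surrounding quotes removed.
    Multi-line values and ODL type conversion are not handled.
    """
    root = {}
    stack = [root]
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line == "END" or "=" not in line:
            continue  # blank lines, the final END, and anything unrecognized
        key, value = (part.strip() for part in line.split("=", 1))
        if key in ("GROUP", "OBJECT"):
            child = {}
            stack[-1][value] = child
            stack.append(child)
        elif key in ("END_GROUP", "END_OBJECT"):
            if len(stack) == 1:
                raise ValueError(f"unbalanced {key} = {value}")
            stack.pop()
        else:
            stack[-1][key] = value.strip('"')
    if len(stack) != 1:
        raise ValueError("unterminated GROUP/OBJECT block")
    return root
```

Once ncdump (next section) gives us the metadata as text, something along these lines should make it much easier to poke around in.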

Tools

There's a page on the NSIDC web site with links to a few HDF-EOS tools. One of them converts HDF-EOS files to ASCII, and I believe that's the tool we're going to want to use.

The tool is called ncdump, and it has its own web page. On that page you'll find instructions for installing and using ncdump on UNIX and Windows platforms. I'm not going to repeat those instructions here. Just follow them and you'll have ncdump as well as several other tools installed on your system, ready to use.
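ncdump writes its text output to standard output, so from a shell you'd redirect it to a file yourself. With 30 granules per day, a small Python wrapper saves some typing. This is a sketch, not part of the ncdump distribution: it assumes ncdump is on your PATH, and the optional -h flag assumes your build behaves like the netCDF ncdump, where -h prints only the header (dimensions, variables, attributes) and skips the data values. `dump_to_ascii` is a name I made up; the `tool` parameter just lets you point it at a different command.

```python
import subprocess
from pathlib import Path


def dump_to_ascii(hdf_path, out_dir, tool=("ncdump",), header_only=False):
    """Run an HDF-to-ASCII dumper on one file and save its output as .txt.

    tool: command prefix to run; defaults to ncdump, which must be on PATH.
    header_only: pass -h so only the header is dumped, not the data values.
    Returns the path of the text file written.
    """
    hdf_path = Path(hdf_path)
    out_path = Path(out_dir) / (hdf_path.stem + ".txt")
    cmd = [*tool]
    if header_only:
        cmd.append("-h")
    cmd.append(str(hdf_path))
    with open(out_path, "w") as out:
        # check=True raises CalledProcessError if the tool exits with an error.
        subprocess.run(cmd, stdout=out, check=True)
    return out_path
```

Given how large a full day is, starting with header_only=True on one granule is probably the sensible first step before dumping everything.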

Note: if you're asked for a user ID and password when FTPing the files to your computer, log on as an anonymous (guest) user with no password.

Wrapping Up

That's all I'm going to cover in this post. At this point you know about the raw UAH and RSS data from the Aqua satellite, where to get that data, and how to download it to your computer. You also have a few tools for working with the data.

This is as far as I've gone with the process so far. There's a lot here and I wanted to stop and document the steps I've taken before moving on.

References:

How the UAH Global Temperatures Are Produced

National Snow and Ice Data Center AMSR-E Aqua Data Order Form

NSIDC Introduction to HDF-EOS

NSIDC HDF-EOS tools

NSIDC ncdump Tool Web Page
